This article shows CPU-only inference on a modern server processor, the AMD EPYC 9554. The model is Meta's Llama 4 Scout 17B 16E, tested with different quantizations to show the difference in token generation speed (tokens per second) and memory consumption. Llama 4 Scout 17B 16E Instruct is a pretty solid LLM of the MoE (Mixture of Experts) type and offers a significant upgrade over the Llama 3 models. It is published by Meta, and in many cases it is worth considering for offloading some LLM work locally for free (math, IT, general use, text and image understanding). The model has 107.77B total parameters and 16 experts, with 17B parameters active per token. The interesting thing about this model is its context window of 10 million tokens, significantly more than most other models, which offer around 130 000 tokens. The article focuses only on benchmarking token generation per second; there are other papers on the output quality of the different quantized versions.

The testing bench is:

- Single socket AMD EPYC 9554 CPU – 64 core CPU / 128 threads

- 192GB RAM in 12 channels: all 12 CPU memory channels are populated with 16GB DDR5 5600MHz Samsung modules.

- ASUS K14PA-U24-T Series motherboard

- Testing with LLAMA.CPP – llama-bench

- theoretical memory bandwidth 460.8 GB/s (according to the official documents from AMD)

- the context window is the default 4K of the llama-bench tool. The memory consumption could vary greatly if the context window is increased.

- More information for the setup and benchmarks – LLM inference benchmarks with llamacpp and AMD EPYC 9554 CPU
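The 460.8 GB/s figure in the list above can be reproduced with simple arithmetic. The sketch below assumes a 64-bit (8-byte) data path per channel and a memory speed of 4800 MT/s. That speed is my assumption, not something stated in the article: it is the value that yields 460.8 GB/s with 12 channels, even though the modules are rated at 5600. The function name is hypothetical.

```python
def peak_bandwidth_gb_s(channels: int, mega_transfers_per_s: int, bus_bytes: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in decimal GB/s.

    channels             -- number of populated memory channels
    mega_transfers_per_s -- memory speed in MT/s (assumed, see the text above)
    bus_bytes            -- bytes moved per transfer per channel (64-bit = 8)
    """
    return channels * mega_transfers_per_s * bus_bytes / 1000


# 12 channels at the assumed 4800 MT/s
print(peak_bandwidth_gb_s(12, 4800))
# The same platform if the memory actually ran at the modules' rated 5600 MT/s
print(peak_bandwidth_gb_s(12, 5600))
```

Token generation on a CPU is usually limited by memory bandwidth, so this number is a useful ceiling to keep in mind when reading the results.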

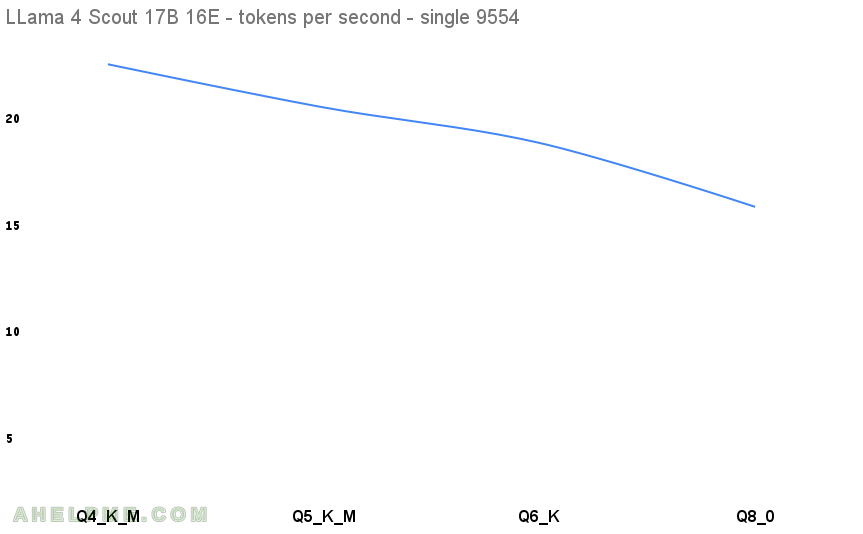

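Here are the results. The tokens/s column is the average over the five generation lengths of each test. The first benchmark, Q4, serves as the baseline because Q4 quantizations are really popular: they offer good quality and a really small footprint compared to the full-size model. Each value in the diff column is the drop in tokens per second relative to the previous row.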

| N | model | parameters | quantization | memory | diff t/s % (vs. previous row) | tokens/s |
|---|---|---|---|---|---|---|
| 1 | Llama 4 Scout 17B 16E | 107.77B | Q4_K_M | 60.86 GiB | 0 | 21.992 |
| 2 | Llama 4 Scout 17B 16E | 107.77B | Q5_K_M | 71.28 GiB | 9.18 | 19.972 |
| 3 | Llama 4 Scout 17B 16E | 107.77B | Q6_K | 82.35 GiB | 8.32 | 18.31 |
| 4 | Llama 4 Scout 17B 16E | 107.77B | Q8_0 | 106.65 GiB | 16.35 | 15.316 |

Going from Q4 to Q8 costs 30.35% of the token generation speed, yet even Q8 is fully usable at around 15 tokens per second. Around 15 tokens per second is good enough for daily use by a single user, which is the typical scenario for CPU inference.
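For readers who want to check the summary numbers, here is a minimal sketch that recomputes them from the raw llama-bench results listed further down. The per-test values are copied from those outputs; the variable and function names are my own. The last digit may differ from the table because of rounding.

```python
from statistics import mean

# tokens/s for tg128, tg256, tg512, tg1024, tg2048, copied from the outputs below
runs = {
    "Q4_K_M": [22.55, 22.43, 22.28, 21.84, 20.86],
    "Q5_K_M": [20.54, 20.39, 20.16, 19.82, 18.95],
    "Q6_K": [18.79, 18.65, 18.46, 18.19, 17.46],
    "Q8_0": [15.63, 15.54, 15.42, 15.24, 14.75],
}

# Average tokens/s per quantization (the tokens/s column of the summary)
averages = {quant: mean(values) for quant, values in runs.items()}


def slowdown_pct(faster: float, slower: float) -> float:
    """Drop in tokens/s from `faster` to `slower`, as a percentage of `faster`."""
    return (faster - slower) / faster * 100


names = list(averages)
for prev, cur in zip(names, names[1:]):
    drop = slowdown_pct(averages[prev], averages[cur])
    print(f"{prev} -> {cur}: {averages[cur]:.3f} t/s, {drop:.2f}% slower")

# Overall drop from the smallest to the largest quantization
total = slowdown_pct(averages["Q4_K_M"], averages["Q8_0"])
print(f"Q4_K_M -> Q8_0: {total:.2f}% slower")
```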

Here are all the tests output:

1. Llama 4 Scout 17B 16E Instruct Q4_K_M

Using unsloth Llama-4-Scout-17B-16E-Instruct-Q4_K_M-00001-of-00002.gguf and Llama-4-Scout-17B-16E-Instruct-Q4_K_M-00002-of-00002.gguf files.

/root/llama.cpp/build/bin/llama-bench --numa distribute -t 64 -p 0 -n 128,256,512,1024,2048 -m /root/models/tests/Llama-4-Scout-17B-16E-Instruct-Q4_K_M-00001-of-00002.gguf

| model | size | params | backend | threads | test | t/s |
| ------------------------------------ | ---------: | ---------: | ---------- | ------: | --------------: | -------------------: |
| llama4 17Bx16E (Scout) Q4_K - Medium | 60.86 GiB | 107.77 B | BLAS,RPC | 64 | tg128 | 22.55 ± 0.01 |
| llama4 17Bx16E (Scout) Q4_K - Medium | 60.86 GiB | 107.77 B | BLAS,RPC | 64 | tg256 | 22.43 ± 0.03 |
| llama4 17Bx16E (Scout) Q4_K - Medium | 60.86 GiB | 107.77 B | BLAS,RPC | 64 | tg512 | 22.28 ± 0.02 |
| llama4 17Bx16E (Scout) Q4_K - Medium | 60.86 GiB | 107.77 B | BLAS,RPC | 64 | tg1024 | 21.84 ± 0.04 |
| llama4 17Bx16E (Scout) Q4_K - Medium | 60.86 GiB | 107.77 B | BLAS,RPC | 64 | tg2048 | 20.86 ± 0.01 |

build: 66625a59 (6040)

2. Llama 4 Scout 17B 16E Instruct Q5_K_M

Using unsloth Llama-4-Scout-17B-16E-Instruct-Q5_K_M-00001-of-00002.gguf and Llama-4-Scout-17B-16E-Instruct-Q5_K_M-00002-of-00002.gguf files.

/root/llama.cpp/build/bin/llama-bench --numa distribute -t 64 -p 0 -n 128,256,512,1024,2048 -m /root/models/tests/Llama-4-Scout-17B-16E-Instruct-Q5_K_M-00001-of-00002.gguf

| model | size | params | backend | threads | test | t/s |
| ------------------------------------ | ---------: | ---------: | ---------- | ------: | --------------: | -------------------: |
| llama4 17Bx16E (Scout) Q5_K - Medium | 71.28 GiB | 107.77 B | BLAS,RPC | 64 | tg128 | 20.54 ± 0.01 |
| llama4 17Bx16E (Scout) Q5_K - Medium | 71.28 GiB | 107.77 B | BLAS,RPC | 64 | tg256 | 20.39 ± 0.01 |
| llama4 17Bx16E (Scout) Q5_K - Medium | 71.28 GiB | 107.77 B | BLAS,RPC | 64 | tg512 | 20.16 ± 0.01 |
| llama4 17Bx16E (Scout) Q5_K - Medium | 71.28 GiB | 107.77 B | BLAS,RPC | 64 | tg1024 | 19.82 ± 0.01 |
| llama4 17Bx16E (Scout) Q5_K - Medium | 71.28 GiB | 107.77 B | BLAS,RPC | 64 | tg2048 | 18.95 ± 0.03 |

build: 66625a59 (6040)

3. Llama 4 Scout 17B 16E Instruct Q6_K

Using unsloth Llama-4-Scout-17B-16E-Instruct-Q6_K-00001-of-00002.gguf and Llama-4-Scout-17B-16E-Instruct-Q6_K-00002-of-00002.gguf files.

/root/llama.cpp/build/bin/llama-bench --numa distribute -t 64 -p 0 -n 128,256,512,1024,2048 -m /root/models/tests/Llama-4-Scout-17B-16E-Instruct-Q6_K-00001-of-00002.gguf

| model | size | params | backend | threads | test | t/s |
| ------------------------------ | ---------: | ---------: | ---------- | ------: | --------------: | -------------------: |
| llama4 17Bx16E (Scout) Q6_K | 82.35 GiB | 107.77 B | BLAS,RPC | 64 | tg128 | 18.79 ± 0.00 |
| llama4 17Bx16E (Scout) Q6_K | 82.35 GiB | 107.77 B | BLAS,RPC | 64 | tg256 | 18.65 ± 0.01 |
| llama4 17Bx16E (Scout) Q6_K | 82.35 GiB | 107.77 B | BLAS,RPC | 64 | tg512 | 18.46 ± 0.00 |
| llama4 17Bx16E (Scout) Q6_K | 82.35 GiB | 107.77 B | BLAS,RPC | 64 | tg1024 | 18.19 ± 0.00 |
| llama4 17Bx16E (Scout) Q6_K | 82.35 GiB | 107.77 B | BLAS,RPC | 64 | tg2048 | 17.46 ± 0.01 |

build: 66625a59 (6040)

4. Llama 4 Scout 17B 16E Instruct Q8_0

Using unsloth Llama-4-Scout-17B-16E-Instruct-Q8_0-00001-of-00003.gguf, Llama-4-Scout-17B-16E-Instruct-Q8_0-00002-of-00003.gguf and Llama-4-Scout-17B-16E-Instruct-Q8_0-00003-of-00003.gguf files.

/root/llama.cpp/build/bin/llama-bench --numa distribute -t 64 -p 0 -n 128,256,512,1024,2048 -m /root/models/tests/Llama-4-Scout-17B-16E-Instruct-Q8_0-00001-of-00003.gguf

| model | size | params | backend | threads | test | t/s |
| ------------------------------ | ---------: | ---------: | ---------- | ------: | --------------: | -------------------: |
| llama4 17Bx16E (Scout) Q8_0 | 106.65 GiB | 107.77 B | BLAS,RPC | 64 | tg128 | 15.63 ± 0.00 |
| llama4 17Bx16E (Scout) Q8_0 | 106.65 GiB | 107.77 B | BLAS,RPC | 64 | tg256 | 15.54 ± 0.02 |
| llama4 17Bx16E (Scout) Q8_0 | 106.65 GiB | 107.77 B | BLAS,RPC | 64 | tg512 | 15.42 ± 0.00 |
| llama4 17Bx16E (Scout) Q8_0 | 106.65 GiB | 107.77 B | BLAS,RPC | 64 | tg1024 | 15.24 ± 0.00 |
| llama4 17Bx16E (Scout) Q8_0 | 106.65 GiB | 107.77 B | BLAS,RPC | 64 | tg2048 | 14.75 ± 0.00 |

build: 66625a59 (6040)
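llama-bench prints its results as a pipe-delimited table, so the outputs above are easy to post-process. The following is a rough sketch of a parser for that text, assuming the seven-column layout shown in this article (model, size, params, backend, threads, test, t/s). The function name `parse_tg_rows` and the shortened sample are my own, not part of llama.cpp.

```python
def parse_tg_rows(table_text: str) -> dict[str, float]:
    """Map each text-generation test name (e.g. 'tg128') to its mean tokens/s."""
    results: dict[str, float] = {}
    for line in table_text.splitlines():
        # Split a '| a | b | ... |' row into stripped cell values
        cells = [cell.strip() for cell in line.strip().strip("|").split("|")]
        # Skip the header, the separator row, and anything that is not a tg test
        if len(cells) != 7 or not cells[5].startswith("tg"):
            continue
        # The t/s cell looks like '15.63 ± 0.00'; keep only the mean
        results[cells[5]] = float(cells[6].split("±")[0])
    return results


# Shortened sample taken from the Q8_0 output above
sample = """\
| model | size | params | backend | threads | test | t/s |
| --- | ---: | ---: | --- | ---: | ---: | ---: |
| llama4 17Bx16E (Scout) Q8_0 | 106.65 GiB | 107.77 B | BLAS,RPC | 64 | tg128 | 15.63 ± 0.00 |
| llama4 17Bx16E (Scout) Q8_0 | 106.65 GiB | 107.77 B | BLAS,RPC | 64 | tg2048 | 14.75 ± 0.00 |
"""

print(parse_tg_rows(sample))
```

Feeding the full output of each run into such a function gives the per-length values that the summary averages are built from.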

Before all tests, automatic NUMA balancing was disabled and the page cache was dropped with the following commands:

echo 0 > /proc/sys/kernel/numa_balancing

echo 3 > /proc/sys/vm/drop_caches